Background

As organizations scale and evolve, their application landscape often grows organically, resulting in overlapping systems, rising costs, and increasing operational complexity. Over time, this sprawl limits business agility, slows innovation, and makes it harder to align technology investments with strategic priorities. Leaders are challenged not just to modernize applications, but to do so in a way that delivers measurable business outcomes without disrupting ongoing operations.

This article presents a practical use case that applies the IBM Garage methodology to application rationalization and modernization. It demonstrates how a structured, collaborative, and iterative approach helps organizations assess what truly matters, simplify their application portfolio, and focus investment on the capabilities that drive business value. By combining design thinking, co-creation, and continuous delivery, the IBM Garage approach enables teams to move from analysis to action—reducing redundancy, improving time to market, and building a foundation that can adapt to future change.

Through this use case, the article shows how application rationalization becomes not just a technology exercise, but a business-led transformation that supports growth, resilience, and long-term competitiveness.

What is the IBM Garage Methodology?

IBM Garage Methodology is a transformative framework designed to help enterprises innovate, build, and scale solutions rapidly while minimizing risk and maximizing business value. Born from IBM’s extensive experience in digital transformation and agile practices, the methodology combines design thinking, agile development, DevOps, and site reliability engineering (SRE) into a cohesive approach that emphasizes co-creation, continuous learning, and sustainable delivery. It’s designed to work from first idea through implementation to cultural change, integrating innovation into day-to-day operations while meeting organizational requirements.

Foundational Principle — Outcome First, Technology Second

Before any phase begins, the Garage approach requires a single agreed statement of what a successful rationalized portfolio looks like in Year 3, expressed in business terms rather than IT terms. The rationalization targets for this use case (500 to 200 applications, $15M annual savings, 4-month time-to-market) become the north star that every sprint, every wave, and every governance gate is measured against. Without this anchoring, rationalization programmes drift into technology exercises that deliver infrastructure savings but fail to move the business metrics that the CFO actually cares about.

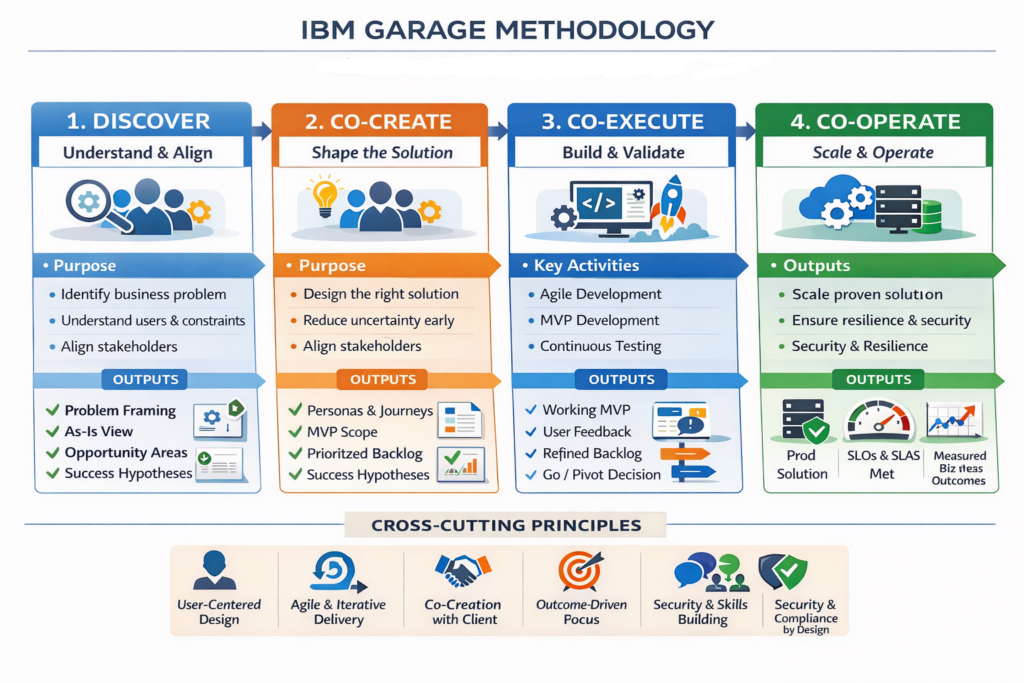

The methodology is structured into four major phases, each of which can be used independently if needed:

Phase 1 — Discover: The Evidence Engine (Weeks 1–8)

- Team composition: The Discover phase runs with a deliberately small, high-skill team, typically 6 to 8 people. It includes an IBM Garage architect leading the technical assessment, an enterprise design thinking facilitator running stakeholder workshops, two business analysts conducting interviews and cost modelling, and a client-side programme director who owns the internal relationships. The small size is intentional: large discovery teams generate large volumes of inconsistent data. The Garage model prioritizes depth over breadth in the initial scan.

- Automated discovery first: The first two weeks are tooling-led. A tool such as IBM’s Consulting Cloud Accelerator (ICCA) is deployed to scan the application estate automatically, pulling data from the CMDB, cloud billing consoles, Active Directory login logs, software asset management tools, and infrastructure monitoring platforms. This produces the raw portfolio inventory: every application, its hosting cost, its license cost, its last deployment date, and its login activity over the preceding 90 days. Critically, this automated scan takes two weeks, not two months. It produces an imperfect but directionally correct picture that is good enough to focus the human investigation that follows.

- Stakeholder interviews as the validation layer: Weeks 3 and 4 are interview-led. The design thinking facilitator runs structured discovery sessions with business domain owners — Finance, HR, Sales, IT Operations, and Security. The interview protocol is not “tell us about your applications.” It is “walk us through how you do your most important work, and show us the tools you use.” This surfaces two categories of finding that automated tools never capture: applications that have high login activity but deliver no real business value (because users are forced to log in by a broken process), and applications with low login activity that are actually business-critical batch processors running overnight. Both categories would be misclassified by the automated scan alone.

- Technical health scoring: Weeks 5 and 6 produce the technical health dimension. Each application is scored across five axes: platform supportability (is the underlying OS, database, or framework still vendor-supported), security posture (known CVEs, patching currency), integration complexity (how many systems depend on it), deployment agility (how long does a release take), and scalability (can it handle peak demand). The scoring is calibrated so that an assessor with three hours per application can produce a defensible score — the Garage model explicitly rejects comprehensive deep-dives on every application because the cost of that analysis exceeds the value of the precision it produces.

- The portfolio heatmap output: By Week 8, the Discover phase produces a single deliverable: a portfolio heatmap plotting every application on two axes — business value (vertical) and technical health (horizontal). This is the portfolio quadrant we discussed in the rationalization blueprint. Every application falls into one of four zones: invest and retain (high value, healthy tech), refactor or replace (high value, poor tech), optimize or tolerate (low value, healthy tech), retire (low value, poor tech). The heatmap is the evidence base for everything that follows. It is also the political document that makes difficult conversations possible: a business unit leader cannot argue that their zombie application is strategically important when the heatmap shows zero active users and an unsupported platform.
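
The heatmap zoning described above can be sketched in a few lines of Python. This is a minimal illustration, not the Garage scoring model: the 0 to 10 scale, the unweighted mean across the five axes, the midpoint threshold of 5, and every name in the code (`AppAssessment`, `technical_health`, `heatmap_zone`) are assumptions made for the example.

```python
from dataclasses import dataclass
from statistics import mean

# The five technical health axes named in the text.
HEALTH_AXES = (
    "platform_supportability",
    "security_posture",
    "integration_complexity",
    "deployment_agility",
    "scalability",
)


@dataclass
class AppAssessment:
    name: str
    business_value: float  # assumed 0-10 scale, higher = more valuable
    health: dict           # assumed 0-10 per axis, higher = healthier
                           # (so a highly entangled app scores LOW on
                           # integration_complexity)


def technical_health(app: AppAssessment) -> float:
    """Unweighted mean of the five axis scores (an assumption)."""
    return mean(app.health[axis] for axis in HEALTH_AXES)


def heatmap_zone(app: AppAssessment, threshold: float = 5.0) -> str:
    """Map an application to one of the four heatmap zones."""
    high_value = app.business_value >= threshold
    healthy = technical_health(app) >= threshold
    if high_value and healthy:
        return "invest and retain"
    if high_value:
        return "refactor or replace"
    if healthy:
        return "optimize or tolerate"
    return "retire"


# Hypothetical application: valuable to the business, weak on every axis.
billing = AppAssessment("legacy-billing", 8.0, {axis: 3.0 for axis in HEALTH_AXES})
print(billing.name, "->", heatmap_zone(billing))
```

Under these assumptions the hypothetical `legacy-billing` application lands in the refactor or replace zone. In a real engagement the threshold and any axis weights would need to be calibrated with the client rather than fixed at a midpoint.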

Phase 2 — Co-create: The Decision Factory (Weeks 9–20)

- The co-creation war room: The Garage model runs Co-create from a dedicated physical or virtual war room where the Rationalization team and the client’s programme team work side-by-side, full-time, for the duration of the phase. This is not advisory engagement — it is embedded co-creation. The Rationalization team brings the methodology and the analytical tools; the client team brings the business context and the organizational authority. Neither can succeed without the other, and the war room model makes that interdependency structural rather than aspirational.

- Application-by-application decision workshops: Weeks 9 through 14 run a series of structured decision workshops — one per business domain — working through the portfolio heatmap application by application. Each workshop follows a consistent 90-minute protocol. The first 30 minutes present the application’s position on the heatmap with the supporting evidence. The next 30 minutes explore the disposition options using the 7Rs framework, with a pre-built financial model showing the TCO comparison for each option. The final 30 minutes produce a decision, a business case owner, and a target date. This cadence means the team works through 15 to 20 applications per workshop, covering the full 500-application estate across 25 to 30 workshops over six weeks.

- Business case development: Weeks 15 and 16 are dedicated to building the financial models for the rationalization roadmap. Each of the seven rationalization actions — Retire, Replace, Consolidate, Rehost, Replatform, Refactor, Retain — has a standard Garage business case template with pre-populated industry benchmark costs and savings ranges. The team populates these templates with the client’s actual cost data from the Discover phase, producing application-level business cases that roll up into the programme-level financial model. The key discipline here is independent validation: the Garage model requires a second reviewer who was not involved in building the business case to challenge every material assumption before it is presented to the steering committee. This prevents the optimism bias that inflates savings projections in every rationalization programme.

- The 3-year roadmap: Weeks 17 and 18 sequence the approved actions into a phased delivery roadmap. The sequencing logic follows three rules: quick wins first (retire zombie apps in Month 1, not Month 18), dependency-respecting (do not retire App A before migrating App B that feeds from it), and wave-sized (no single wave has more than 30 applications in flight simultaneously, because beyond that the programme loses the ability to track and manage risk). The roadmap is built in a visual tool, typically a combination of Miro for the dependency mapping and a Gantt tool for the timeline, so that the steering committee can interrogate it interactively rather than reviewing a static document.

- Stakeholder commitment and the governance gate: Weeks 19 and 20 are the political phase. The programme director presents the roadmap and financial model to the executive steering committee. This is not a status update — it is a formal investment decision. The ask is specific: approve the 3-year roadmap, commit the Phase 1 budget of $500K to $1M, establish the programme governance structure, and sign the escalation protocol that gives the programme director authority to make decisions below a defined threshold without steering committee sign-off. Without that last element, rationalization programmes stall because every difficult decision gets escalated and every escalation takes four weeks. The Garage model makes the governance structure explicit and contractual before execution begins.
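
The three roadmap sequencing rules (quick wins first, dependency-respecting, wave-sized) can be expressed as a simple batching routine. The sketch below is illustrative only: the `Action` structure, the `quick_win` flag, and the `sequence_waves` function are hypothetical names, and it simplifies "in flight simultaneously" to "assigned to the same wave".

```python
from dataclasses import dataclass, field


@dataclass
class Action:
    app: str
    disposition: str                 # e.g. "Retire", "Rehost"
    quick_win: bool = False
    # Apps whose actions must complete before this one can start.
    depends_on: set = field(default_factory=set)


def sequence_waves(actions, max_per_wave=30):
    """Group actions into ordered waves of at most max_per_wave apps."""
    pending = {a.app: a for a in actions}
    done = set()
    waves = []
    while pending:
        # An action is ready once all of its prerequisites are in earlier waves.
        ready = [a for a in pending.values() if a.depends_on <= done]
        if not ready:
            raise ValueError(
                "circular or unresolved dependency among: "
                + ", ".join(sorted(pending))
            )
        # Quick wins first, then alphabetical for a stable order.
        ready.sort(key=lambda a: (not a.quick_win, a.app))
        wave = [a.app for a in ready[:max_per_wave]]
        waves.append(wave)
        done.update(wave)
        for app in wave:
            del pending[app]
    return waves


# Hypothetical example: app-a cannot be retired until app-b has migrated.
roadmap = sequence_waves([
    Action("app-a", "Retire", depends_on={"app-b"}),
    Action("app-b", "Rehost"),
    Action("zombie-crm", "Retire", quick_win=True),
])
print(roadmap)
```

In this example the zombie retirement and the `app-b` migration fall into the first wave, and the retirement of `app-a` waits for the second. A real roadmap would also weigh effort, risk, and team capacity, which this sketch deliberately leaves out.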

Phase 3 — Co-execute: The Delivery Engine (Months 6–18)

- Sprint cadence and wave structure: Co-execute runs on a two-week sprint cadence within a three-wave structure. Each sprint has a fixed rhythm: planning on Monday morning, daily standups, mid-sprint review on Wednesday of Week 2, sprint demo and retrospective on Friday of Week 2. The wave structure groups sprints into logical delivery clusters — Wave 1 covers the first 6 months, Wave 2 the next 6, Wave 3 the final 6 months of Co-execute. Each wave has a single, clearly stated business outcome: Wave 1 is “generate $3M in savings and prove the programme delivers.” Wave 2 is “consolidate the major platforms and exit the datacenter.” Wave 3 is “migrate to cloud and validate the integration architecture.”

- The Wave 1 playbook — quick wins: Wave 1 is deliberately engineered for speed and visible success. The Garage principle here is that the programme must produce proof within 90 days or it will lose executive attention. The Wave 1 playbook has four plays that can all be run in parallel:

- The zombie app retirement play runs the automated scan output through a 30-day monitoring protocol: the application is turned off, a redirect message is shown to any user who tries to access it, and after 30 days with zero complaints the application is formally decommissioned and its infrastructure reclaimed. This play can retire 30 to 50 applications in the first quarter with minimal risk because the evidence of zero usage was already established in Discover.

- The vendor renegotiation play uses the cost and usage data from Discover as leverage in renewal negotiations. A vendor whose 500-seat license is 36% utilized has no negotiating position when the client presents the usage data and signals that they are evaluating alternatives. The Garage model standardizes this as a four-week negotiation cycle per vendor, running five vendors in parallel in Wave 1.

- The shadow IT elimination play shuts down ungoverned SaaS applications by coordinating with IT Security (who can block payment methods and SSO access) and Finance (who can identify the cost centres funding the spend). This is faster than migration and produces immediate savings, but requires the cross-functional authority that the governance structure established in Co-create.

- The low-complexity SaaS replacement play identifies applications where a direct SaaS equivalent exists and the migration complexity is low — typically document management, project tracking, survey tools, and expense management. The Garage team uses a pre-built SaaS evaluation scorecard to select the replacement, a data migration playbook to move the data, and a change management template to communicate to users. The target is a four-sprint migration cycle per application.

- The Wave 2 playbook — platform consolidation: Wave 2 operates at larger scale and higher risk. The CRM consolidation, ERP rationalization, and database consolidation programmes all run here. The Garage model treats each consolidation as a separate programme track with its own sprint team, its own stakeholder group, and its own dependency management. The programme office coordinates across tracks rather than running them sequentially, because sequential execution would extend Wave 2 from 6 months to 18 months. The critical Garage practice in Wave 2 is the strangler fig pattern for major platform replacements. Rather than a big-bang cutover from the old CRM to the new one, the Garage team builds the new platform incrementally alongside the old one, migrating user groups and business processes one at a time over multiple sprints. The old platform is strangled gradually rather than replaced in a single risky event. This approach doubles the migration timeline compared to big-bang but reduces the probability of catastrophic failure from roughly 40% to under 5% based on engagement data.

- Wave 3 and the datacenter exit: Wave 3 is where the cloud migration executes. The Garage model uses a cloud migration factory approach — a standardized pipeline that takes an application from assessment through containerization, testing, and production deployment in a repeatable 6-sprint cycle. The factory model means the team’s productivity accelerates over the wave as the pipeline becomes more efficient, rather than declining as teams encounter one-off complications with each application. The datacenter exit is the Wave 3 milestone that concentrates the most risk. The Garage approach handles this through a soft exit strategy: the target datacenter exit date is set 3 months after the last application migration, not on the same day. This buffer absorbs the inevitable tail of applications that take longer than planned and prevents the datacenter exit deadline from forcing premature migrations.

- The alignment gates in practice: Between each wave — and at the midpoint of Wave 2 — the Garage model runs a formal alignment gate. This is a half-day steering committee session that reviews three things: actual savings delivered versus the business case commitment, the dependency map for the next wave showing no unresolved blockers, and the risk register with active mitigations. The gate produces one of three outcomes: proceed as planned, proceed with adjustments, or pause for reassessment. The Garage model is explicit that pausing at a gate is a sign of programme health, not failure. A programme that never pauses at a gate is either delivering everything perfectly (rare) or not being honest about its risk register (common).
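
The strangler fig pattern described for Wave 2 can be illustrated with a small routing sketch. It assumes migration happens per user group, as the text describes; the `StranglerRouter` class and its method names are hypothetical and stand in for whatever routing layer (an API gateway, a proxy, or an SSO redirect) a real programme would use.

```python
class StranglerRouter:
    """Tracks which platform serves each user group during a migration."""

    def __init__(self, user_groups):
        # Every group starts on the legacy platform.
        self._target = {group: "legacy" for group in user_groups}

    def migrate(self, group):
        """Cut one user group over to the new platform."""
        if group not in self._target:
            raise KeyError(f"unknown user group: {group}")
        self._target[group] = "new"

    def rollback(self, group):
        """Return one user group to the legacy platform if problems appear."""
        if group not in self._target:
            raise KeyError(f"unknown user group: {group}")
        self._target[group] = "legacy"

    def route(self, group):
        """Return the platform that currently serves this group."""
        return self._target[group]

    def legacy_can_be_retired(self):
        """The old platform is fully strangled once no group depends on it."""
        return all(target == "new" for target in self._target.values())


# Hypothetical CRM consolidation with three user groups.
router = StranglerRouter(["sales-emea", "sales-apac", "support"])
router.migrate("support")        # first sprint: lowest-risk group moves
print(router.route("support"))   # served by the new platform
print(router.route("sales-emea"))  # still served by the legacy platform
print(router.legacy_can_be_retired())
```

The point of the design is that each cutover is small and reversible: a failed migration affects one user group and can be rolled back, which is what distinguishes this approach from a big-bang cutover.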

Phase 4 — Co-operate: The Sustained Value Engine (Month 19 onward)

- The hypercare window: The first 90 days of Co-operate are the hypercare window — the highest-risk period for any rationalized portfolio because it is when the migrated applications face real production load for the first time. The Garage model assigns a dedicated hypercare team that is distinct from the Wave 3 delivery team, because the skills required to stabilize a production system under load are different from the skills required to migrate it. The hypercare team monitors a defined set of SLIs (Service Level Indicators) for each migrated application and has a documented escalation path for every failure scenario. The hypercare window closes when 30 consecutive days pass with no P1 or P2 incidents.

- Preventing portfolio re-accumulation: This is arguably the most strategically important activity in the entire Garage framework — and the one most often skipped by programmes that run out of energy by Year 3. Portfolio re-accumulation happens because the organizational incentives that created 500 applications in the first place are still in place after rationalization. Business units still want their own tools. Procurement still approves SaaS subscriptions without architecture review. Shadow IT still proliferates when the sanctioned tools do not meet user needs. The Garage model addresses this through three structural changes that are embedded in Co-operate: a mandatory architecture review board approval for any new application or SaaS subscription above a defined cost threshold, a quarterly portfolio health review that flags any application that crosses the zombie threshold (90 days without active use), and an application lifecycle policy that requires every new application to have a sunset date defined at the time of approval. These are governance changes, not technology changes, and they require the sustained executive sponsorship that the Garage model works to maintain throughout the programme.

- Continuous modernization as a standing capability: The final Garage insight in Co-operate is that rationalization is not a project — it is a capability. The 200-application portfolio of Year 3 will begin accumulating technical debt and business irrelevance from Year 4 onward unless the organization has built the internal capability to manage it continuously. The Garage model’s final deliverable is not a rationalized portfolio — it is a team of people with the skills, tools, and authority to rationalize it again when needed, without external help. The capability transfer that runs throughout Co-create and Co-execute — embedding Garage practices into the client’s own architects, programme managers, and business analysts — is what makes the investment sustainable and the ROI defensible over a five-year horizon.
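
Two of the Co-operate rules are concrete enough to sketch as checks: the hypercare exit condition (30 consecutive days with no P1 or P2 incidents) and the zombie threshold used in the quarterly portfolio review (90 days without active use). The function names, the incident tuple format, and the choice to count the window backwards from today are assumptions made for this example.

```python
from datetime import date, timedelta

SEVERE = {"P1", "P2"}


def hypercare_can_close(incidents, hypercare_start, today, clean_days=30):
    """True if the last clean_days days contain no P1 or P2 incidents.

    incidents: iterable of (incident_date, priority) tuples, e.g. (date, "P2").
    """
    # Hypercare must have run for at least the length of the clean window.
    if (today - hypercare_start).days < clean_days:
        return False
    cutoff = today - timedelta(days=clean_days)
    return not any(
        when > cutoff and priority in SEVERE for when, priority in incidents
    )


def is_zombie(last_active_use, today, threshold_days=90):
    """True if the application has gone threshold_days without active use."""
    return (today - last_active_use).days >= threshold_days


# Hypothetical data for one migrated application.
today = date(2025, 6, 30)
incidents = [(date(2025, 5, 20), "P2"), (date(2025, 6, 15), "P3")]
print(hypercare_can_close(incidents, date(2025, 4, 1), today))
print(is_zombie(date(2025, 3, 1), today))
```

Here the only P2 incident falls outside the 30-day window and the later incident is a P3, so the hypercare window can close. The same application would be flagged as a zombie because its last active use was more than 90 days ago, which is the kind of case the quarterly review is meant to surface.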

Key Principles Behind the Approach

- Iterative & Agile: Short cycles of build, test, and learn enable rapid feedback and early course correction.

- User-Centered: Focuses deeply on the needs of end users to shape solutions that actually deliver value.

- Collaborative: Cross-disciplinary teams work together throughout, blurring traditional client vs. vendor boundaries.

- Business Outcome-Oriented: The goal isn’t technology for its own sake — tools and tech serve the solution and strategic outcomes.

- Culture & Capability Building: Emphasis on organizational change, not just product delivery, enabling teams to sustain innovation beyond the engagement.

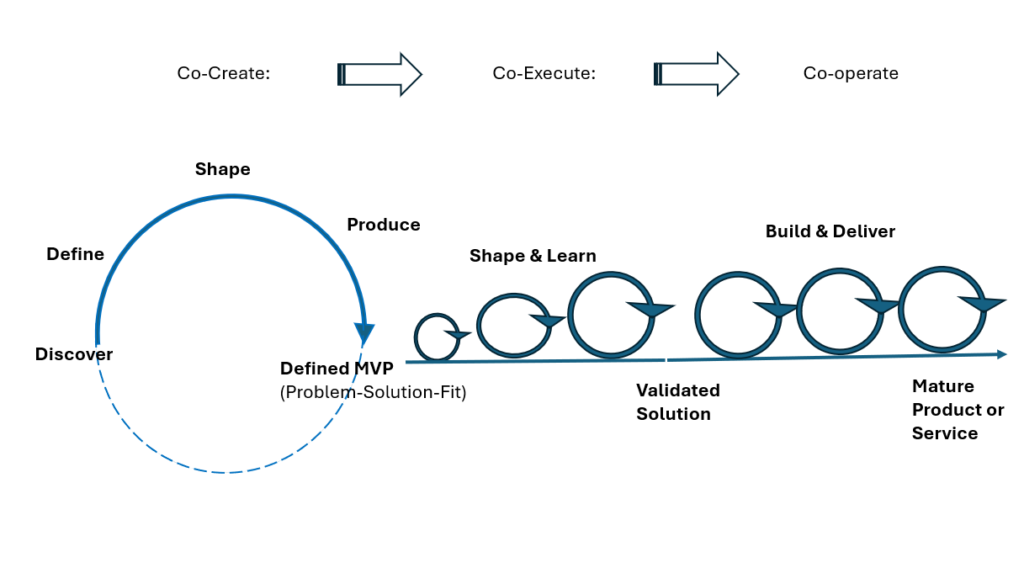

The following diagram illustrates the purpose of each phase, the key activities involved, and the outputs.

The Garage Methodology includes all the steps required for successful innovation and a smooth, seamless end-to-end process. “From the initial idea and scaled implementation to the necessary cultural shift, this approach is based on agile methods that, through short cycles and continuous testing, lead to rapid results and identify weaknesses and necessary course corrections early on. The result is a market-ready product precisely tailored to the specific challenges of its users.” In the following diagram, the circles mark the milestones and show the iterative, agile approach as it progresses through the phases, with clear validation of results.

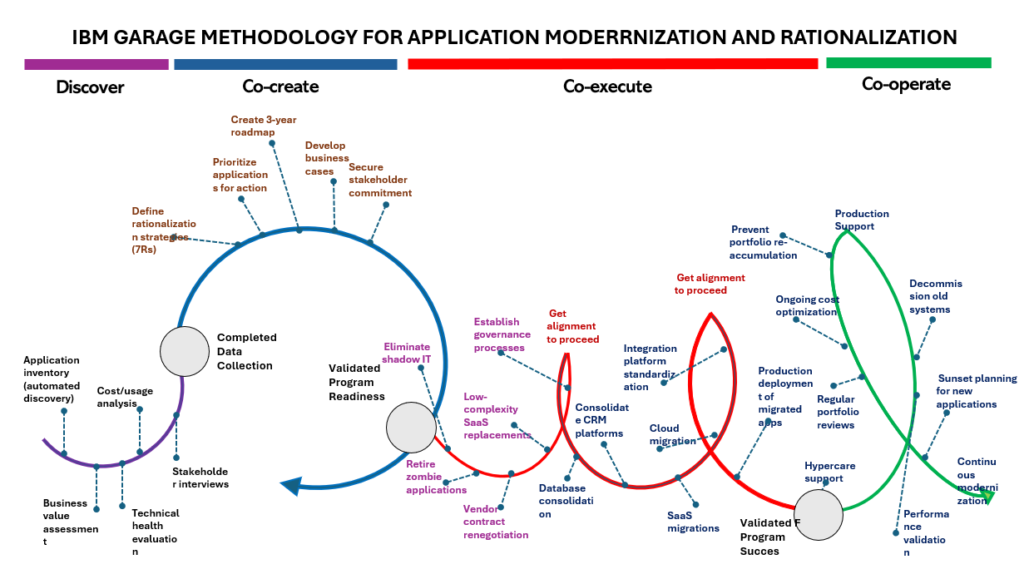

Garage Methodology applied to Application Modernization and Rationalization

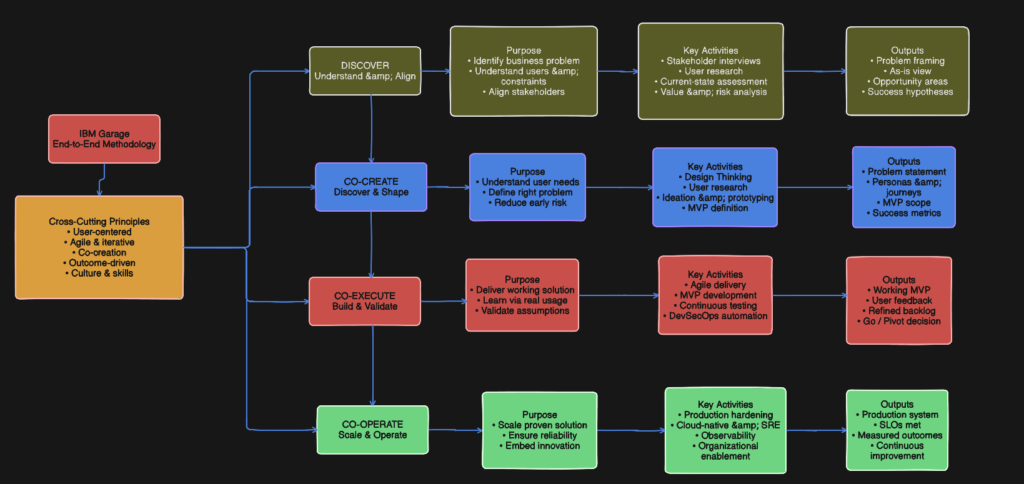

Now let us analyze the approach. The diagram maps the entire Garage journey across four phases, each with its own visual motion metaphor: a discovery loop, a co-create spiral, co-execute waves, and a co-operate arc. Here is what each phase contains and means.

Phase 1 — Discover

The discover phase is shown as a set of parallel activity streams feeding into a central Completed Data Collection milestone. The activities running in parallel are:

- Application inventory via automated discovery tools — the portfolio scan that identifies every application in the estate

- Cost and usage analysis — what each application costs to run and how actively it is being used

- Business value assessment — scoring each application against strategic importance and business outcome contribution

- Technical health evaluation — assessing platform age, supportability, security posture, and technical debt

- Stakeholder interviews — the human layer that automated tools cannot replace, surfacing the organizational context around each application

All five streams converge into Completed Data Collection, which is the evidence base that underpins every decision that follows. Nothing in Co-create can proceed without this foundation.

Phase 2 — Co-create

Co-create is shown as a large circular loop — deliberately so, because it is an iterative validation cycle, not a linear sequence. The outputs that emerge from the loop are:

- Define rationalization strategies using the 7Rs framework (Retire, Replace, Rehost, Replatform, Refactor, Re-architect, Retain)

- Prioritize applications for action — ranking the portfolio by urgency, value, and effort using the priority scoring model

- Create the 3-year roadmap — sequencing the rationalization actions across phases

- Develop business cases — quantifying the cost and savings for each action

- Secure stakeholder commitment — the governance and sponsorship gate

The loop feeds into a second milestone: Validated Program Readiness. This is the critical governance checkpoint — the moment where the steering committee formally approves the programme to proceed. Nothing moves to Co-execute without this validation. The arrow pointing back from Validated Program Readiness toward Completed Data Collection represents the feedback loop — if the data is insufficient or the business cases do not hold, the programme loops back to Discover rather than proceeding on weak foundations.

Phase 3 — Co-execute

Co-execute is shown as a series of three interlocking wave loops — visually representing overlapping delivery sprints where each wave builds on the previous one. The activities are sequenced deliberately from low risk to high complexity:

First wave (lowest risk, immediate value):

- Retire zombie applications — zero usage, zero justification, immediate savings

- Vendor contract renegotiation — no migration required, pure commercial negotiation

- Eliminate shadow IT — removing ungoverned spend

- Low-complexity SaaS replacements — swap simple custom apps for off-the-shelf alternatives

Second wave (moderate complexity):

- Database consolidation — reducing the 400-instance estate

- Consolidate CRM platforms — the major platform consolidations

- Establish governance processes — embedding the programme office cadence

Third wave (highest complexity):

- Cloud migration — moving workloads to cloud infrastructure

- SaaS migrations — larger, more complex platform replacements

- Integration platform standardization — rationalizing the integration layer

Each wave has a Get alignment to proceed gate before it begins — shown in red in the diagram. This is Garage discipline in practice: no wave starts without explicit stakeholder sign-off that the previous wave’s outcomes are validated. This is the de-risking mechanism that prevents the “big bang” failure mode.

The phase closes with Validated Program Success — the formal confirmation that the Co-execute waves have delivered against the business case before Co-operate begins.

Phase 4 — Co-operate

Co-operate is the long-term operational arc — shown as a smooth, sustained curve rather than loops or spirals, representing steady-state operation rather than iterative sprints. The activities here are ongoing rather than time-boxed:

- Production deployment of migrated applications — stabilizing everything that was moved in Co-execute

- Hypercare support — the intensive post-migration support window for critical applications

- Production support — transitioning to normal operational run

- Prevent portfolio re-accumulation — the governance discipline that stops the portfolio from growing back to 500 apps within three years

- Ongoing cost optimization — continuous license right-sizing, contract reviews, cloud cost management

- Regular portfolio reviews — the quarterly cadence that keeps the rationalized estate healthy

- Performance validation — confirming that the modernized applications are delivering the expected business outcomes

- Sunset planning for new applications — embedding decommission planning into the application lifecycle from day one, so future zombie apps are prevented rather than accumulated

- Decommission old systems — the tail-end retirement of anything still running from the pre-rationalization estate

- Continuous modernization — the recognition that rationalization is not a project with an end date but an ongoing discipline

From the diagram, the following points stand out:

- The feedback loop between Co-create and Discover is the most important structural insight in the diagram. It shows that if the business cases developed in Co-create do not stack up, the programme goes back to Discover rather than forward. This reflects real programme discipline: proceeding on weak foundations is the most common cause of rationalization programme failure.

- The wave structure in Co-execute correctly sequences low-risk quick wins before high-complexity migrations. This is the “prove value first” principle made visual — the early waves generate the savings and organizational confidence that fund and justify the later, riskier waves.

- The “prevent portfolio re-accumulation” activity in Co-operate is often missing from rationalization frameworks. It acknowledges that rationalization without sustained governance simply recreates the problem within five years. The Garage model builds the prevention mechanism into the methodology itself.

- The alignment gates (shown in red as “Get alignment to proceed”) between waves represent the stage-gate funding model — each phase only proceeds with explicit approval, which is the primary mechanism for controlling cost overruns and business disruption risk.

Conclusion

This article has described the IBM Garage Methodology in detail and illustrated how it has been applied to an application rationalization and modernization programme. All copyrights associated with the methodology belong to IBM.